Your screenshots know more than you think

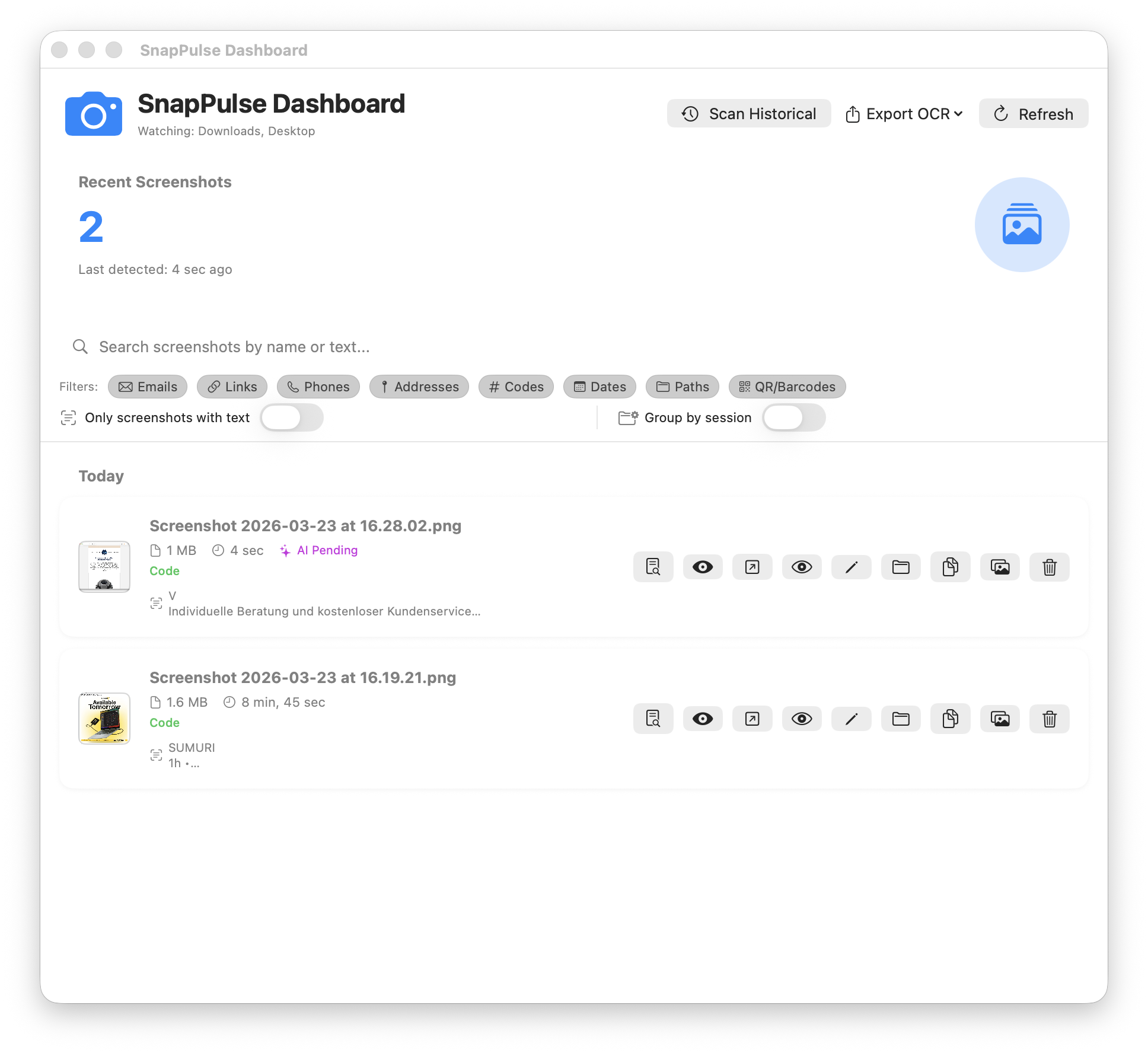

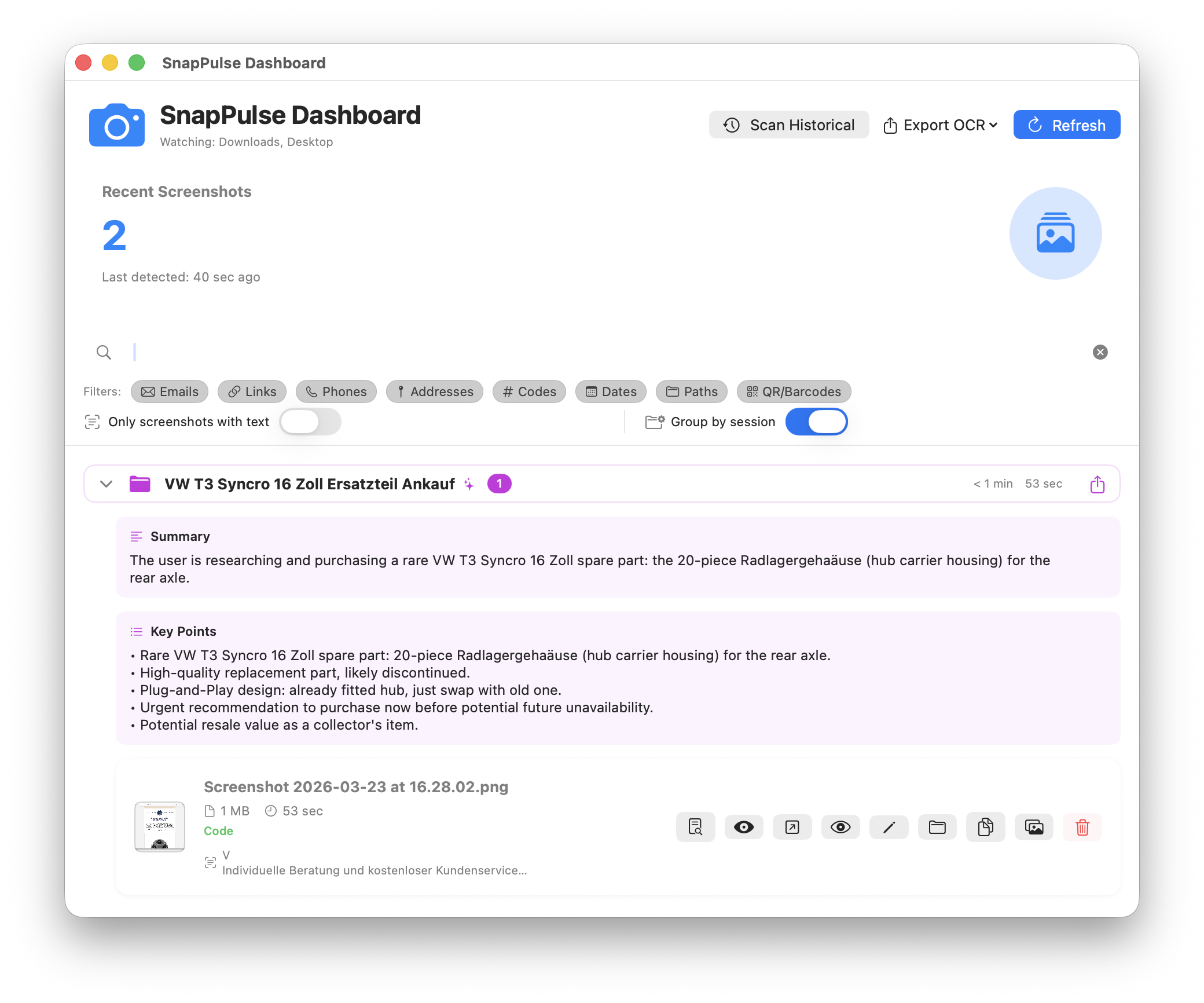

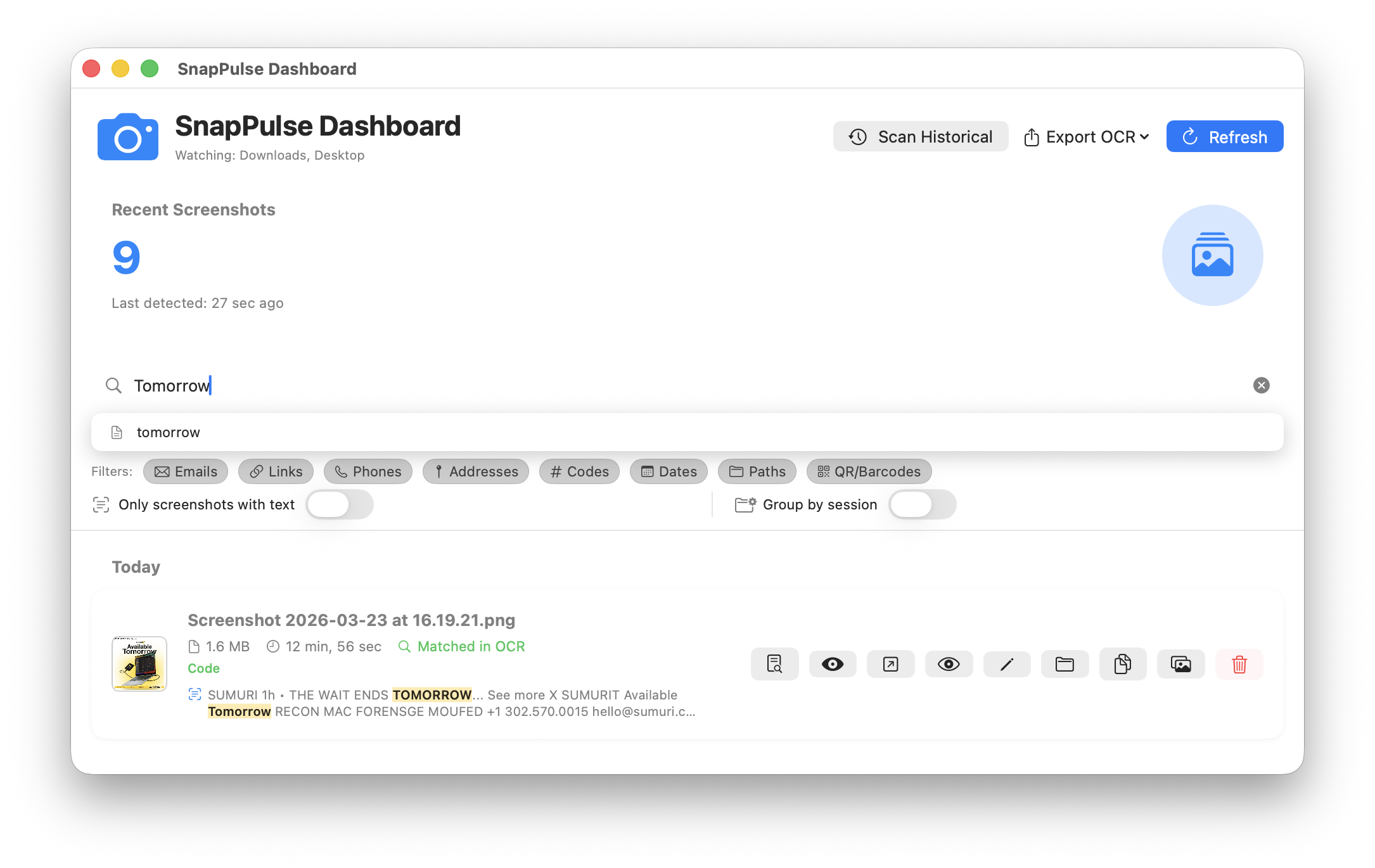

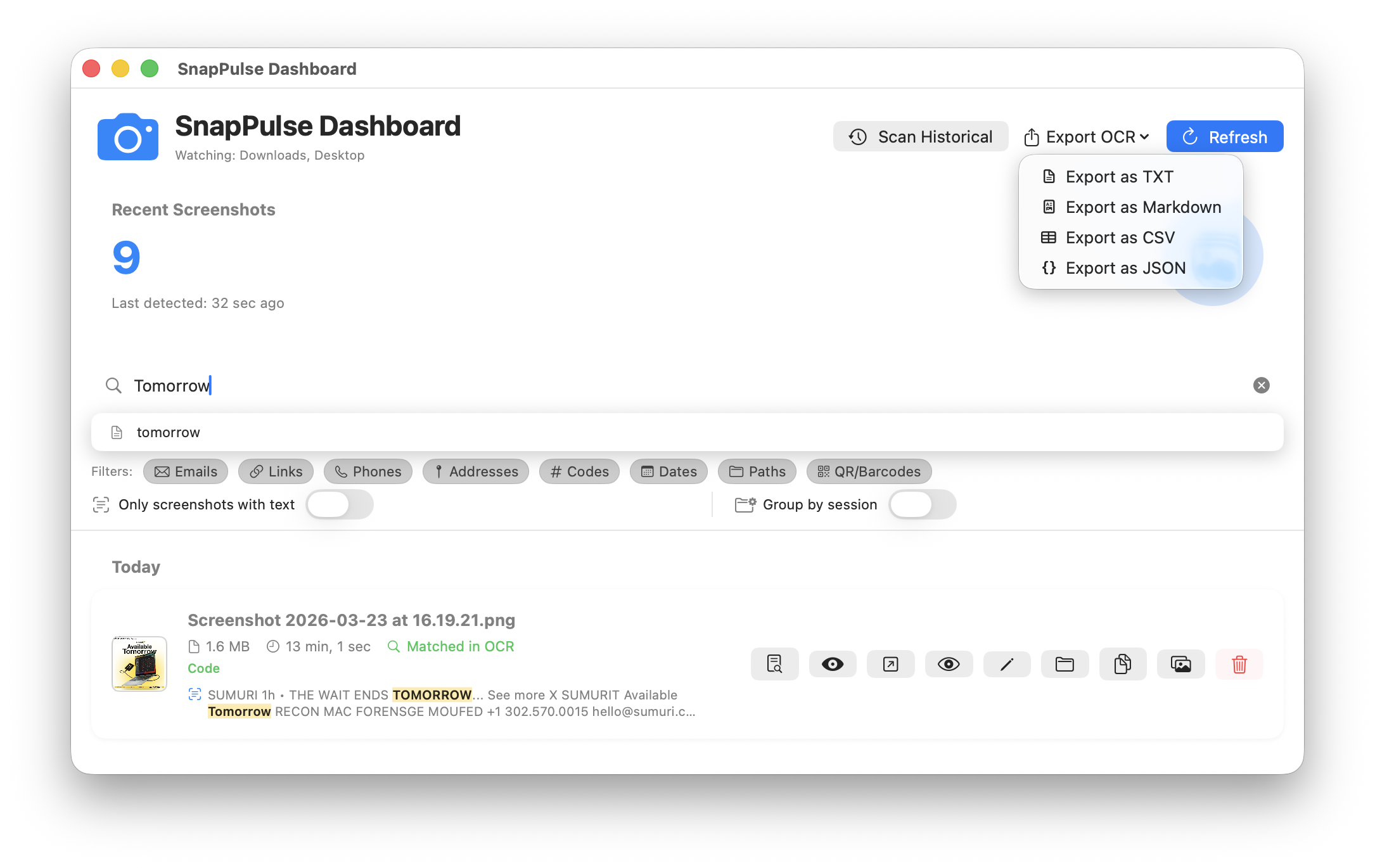

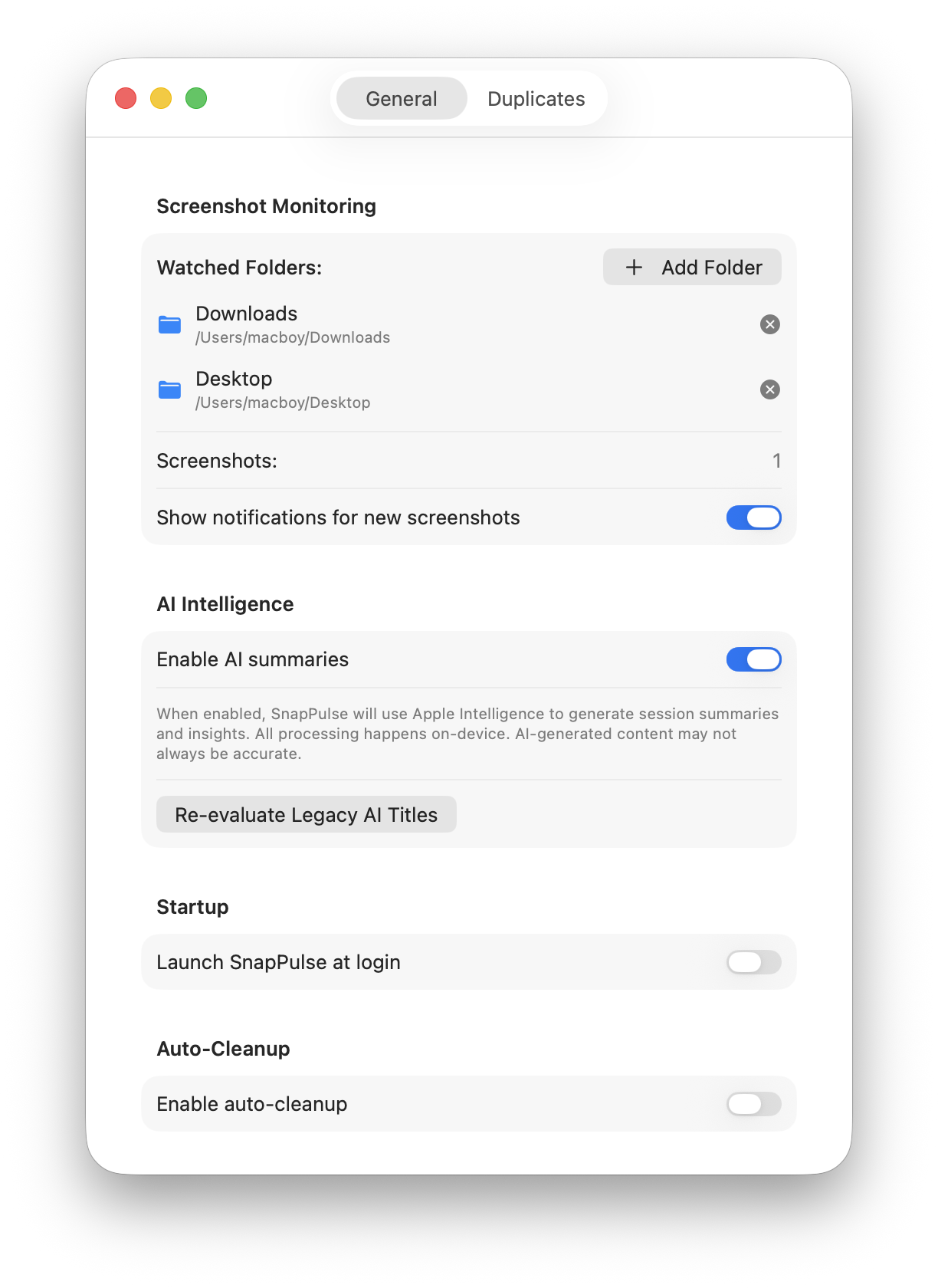

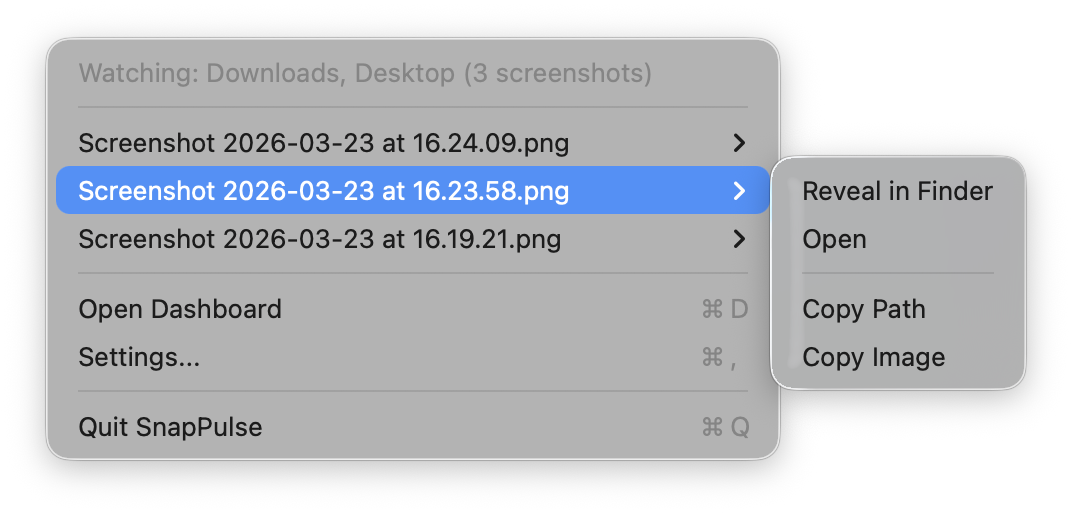

That screenshot you took five minutes ago has a meeting link, a confirmation code, and a phone number buried in it. SnapPulse finds them all automatically — using on-device OCR and Apple Intelligence — so you can act on them with a single click instead of squinting at pixels.